Image SEO in 2026 is defined by three shifts most optimization guides have not caught up with: next-gen formats crossing the adoption threshold, visual search becoming a primary discovery channel, and structured data giving publishers direct control over how Google selects and credits images. Google Lens now processes over 20 billion visual searches per month, and Circle to Search lets users query any image on their screen without typing. The gap between sites still serving unoptimized JPEGs and those running AVIF with full ImageObject schema is measurable in LCP scores, Discover CTR, and AI citation rates. The fundamentals still apply, but in 2026 the competitive edge in image SEO belongs to teams that understand the new mechanisms.

Key Takeaways

- AVIF reached the tipping point. Browser support hit 93.8% in 2025, and AVIF files are roughly 50% smaller than JPEG at equivalent quality. It is the default format for 2026.

- Visual search is a primary channel. Google Lens handles 20 billion monthly searches. Images are discovery entry points, not decorations.

- ImageObject schema expanded. New creditText, copyrightNotice, and creator properties give publishers control over image attribution in traditional and AI search.

- Discover CTR depends on image specs. Pages with 1200px+ images and max-image-preview:large see 45% higher click-through rates in Discover.

- Multimodal content wins AI citations. Pages combining text, images, and structured data see up to 317% more AI Overview citations.

The Goal of Modern Image SEO

Image SEO involves the structural optimization of visuals to ensure they are machine-readable and performant. In 2026, the goal is no longer just “ranking in Google Images.” It is about providing the visual evidence AI models need for source validation and the high-speed infrastructure required for visual discovery. By optimizing images, you improve organic visibility, meet accessibility standards for screen readers, and reduce bounce rates by ensuring a seamless, fast-loading user experience.

What Changed in Image SEO Between 2025 and 2026?

Three developments redefined image optimization in this cycle: AVIF format adoption crossed the mainstream threshold, visual search scaled to a primary discovery channel, and Google gave publishers structured data tools to control image selection and attribution. Each change affects a different layer of the stack, from file delivery to schema markup.

The fundamentals have not moved. Alt text, file naming, compression, lazy loading, and image sitemaps work exactly as before.

What Stayed the Same? The Mechanics of Accessibility and Context.

While AI-driven discovery has evolved, search engines still rely on textual cues to understand visual content. Adding descriptive alt text remains non-negotiable; it supports screen readers for visually impaired users and provides an extra layer of context that reinforces the topic of your page. Captions, used thoughtfully, can also improve user comprehension and engagement, signaling to search algorithms that the content is high-quality.

Strategic File Naming: Avoid generic names like IMG001.jpg; instead, use descriptive, keyword-rich names like modern-wooden-dining-table.jpg to provide search engines with immediate context.

The “Pro” Alt-Text Framework: Alt text must be descriptive, accurate, and concise (aiming for under 125 characters). It serves three purposes: accessibility for visually impaired users, indexing for Google/Bing, and as a fallback if the image fails to load.
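Both rules are easy to check automatically. Below is a minimal Python sketch, assuming file names are built from a short human-written description. The function names `descriptive_filename` and `alt_text_problems` are hypothetical, and the 125-character limit is this article's guideline rather than a hard limit set by Google. Note that purely decorative images may legitimately use an empty `alt=""`, so treat the "empty" flag as a prompt to review, not an automatic failure.

```python
import re
import unicodedata

MAX_ALT_LENGTH = 125  # guideline from this article: keep alt text under 125 characters


def descriptive_filename(description: str, extension: str = "jpg") -> str:
    """Turn a human description into a lowercase, hyphen-separated file name."""
    # Reduce accented characters to their ASCII base letters (e.g. "é" -> "e").
    ascii_text = unicodedata.normalize("NFKD", description).encode("ascii", "ignore").decode("ascii")
    # Collapse every run of non-alphanumeric characters into a single hyphen.
    slug = re.sub(r"[^a-z0-9]+", "-", ascii_text.lower()).strip("-")
    return f"{slug}.{extension}"


def alt_text_problems(alt: str) -> list[str]:
    """Return a list of issues with an alt text; an empty list means it passes."""
    problems = []
    text = alt.strip()
    if not text:
        # Decorative images may use alt="" on purpose; everything else needs a description.
        problems.append("alt text is empty")
    if len(text) >= MAX_ALT_LENGTH:
        problems.append(f"alt text is {len(text)} characters; aim for under {MAX_ALT_LENGTH}")
    # Catch camera or CMS defaults such as "IMG001.jpg" or "DSC_0042".
    if re.fullmatch(r"(img|image|dsc|photo)[-_ ]?\d*(\.\w+)?", text, re.IGNORECASE):
        problems.append("alt text looks like a generic file name")
    return problems
```

For example, `descriptive_filename("Modern Wooden Dining Table")` produces `modern-wooden-dining-table.jpg`, the naming pattern recommended above.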

| What Changed (2025-2026) | What Stayed the Same |
| --- | --- |
| AVIF as default serving format | Alt text best practices |
| Google Lens and Circle to Search at scale | File naming conventions |
| ImageObject schema expansion (creditText, copyrightNotice, creator) | Compression principles |
| Preferred image metadata controls (primaryImageOfPage, og:image) | Image sitemap fundamentals |
| Discover image requirements formalized | Lazy loading implementation |
| AI Overviews multimodal citation signals | CDN delivery basics |

Why Does AVIF Matter for Image SEO Now?

AVIF delivers files approximately 50% smaller than JPEG at equivalent visual quality, and browser support reached 93.8% in 2025. That combination removes both the performance concern and the compatibility barrier that held back adoption.

The impact on Core Web Vitals is direct. On 73% of mobile pages, the Largest Contentful Paint element is an image, so smaller files mean faster LCP. AVIF does not improve rankings as a format signal, but LCP is a confirmed ranking factor, and switching to AVIF is often the most direct way to improve it on image-heavy pages.

| Format | File Size vs. JPEG (equivalent quality) | Browser Support (2025) |
| --- | --- | --- |
| AVIF | ~50% smaller | ~93.8% |
| WebP | ~25-35% smaller | ~95.3% |
| JPEG | Baseline | Universal |

WebP alone is no longer sufficient. AVIF produces files 20-25% smaller than WebP at similar quality. Sites already serving WebP gain measurable compression improvement by adding AVIF as the primary source.

The delivery mechanism is the HTML `<picture>` element. It handles format negotiation (AVIF first, WebP fallback, JPEG final) and resolution negotiation (device-appropriate sizes via `srcset` and `sizes`). Without `<picture>`, publishers cannot serve AVIF while maintaining backward compatibility.
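A minimal sketch of that negotiation, generated in Python. It assumes each image has already been encoded at several widths following a hypothetical `{base}-{width}.{ext}` naming scheme; adapt the paths to your own build pipeline. `picture_element` is an illustrative helper, not a library function.

```python
from html import escape


def picture_element(base: str, widths: list[int], sizes: str, alt: str,
                    width: int, height: int) -> str:
    """Build a <picture> element: AVIF first, WebP fallback, JPEG final.

    `widths` should be in ascending order; the largest width is used as the plain src.
    """
    def srcset(ext: str) -> str:
        # e.g. "/img/table-640.avif 640w, /img/table-1280.avif 1280w"
        return ", ".join(f"{base}-{w}.{ext} {w}w" for w in widths)

    lines = ["<picture>"]
    # Browsers use the first <source> whose type they support, so order matters.
    for ext, mime in (("avif", "image/avif"), ("webp", "image/webp")):
        lines.append(f'  <source type="{mime}" srcset="{escape(srcset(ext))}" sizes="{escape(sizes)}">')
    # Browsers that support neither format (or <picture> itself) load this JPEG <img>,
    # which also carries the alt text and intrinsic dimensions for every format.
    fallback = f"{base}-{widths[-1]}.jpg"
    lines.append(
        f'  <img src="{escape(fallback)}" srcset="{escape(srcset("jpg"))}" '
        f'sizes="{escape(sizes)}" alt="{escape(alt)}" width="{width}" height="{height}">'
    )
    lines.append("</picture>")
    return "\n".join(lines)


print(picture_element("/img/dining-table", [640, 1280],
                      "(max-width: 800px) 100vw, 800px", "Oak dining table", 1280, 720))
```

Because the `<img>` declares `width` and `height`, the browser can reserve layout space before the file arrives, which also helps Cumulative Layout Shift.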

Adobe integrated native AVIF export into Photoshop in 2025, removing the last major tooling barrier.

Technical Tips to Boost Image SEO

Beyond selecting next-gen formats like AVIF, you need delivery infrastructure that can handle “heavy” visual assets. Lazy loading defers offscreen images until the user scrolls near them, preventing the slow initial loads that increase bounce rates and hurt Core Web Vitals. Exclude above-the-fold images, especially the LCP element, from lazy loading: deferring them delays LCP instead of improving it.

For businesses with global audiences, integrating a Content Delivery Network (CDN) ensures that these optimized images are delivered quickly regardless of the user’s location.

Lazy loading can be implemented with:

- JavaScript libraries like LazyLoad.js

- Native HTML attributes: `<img loading="lazy" />`
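Native lazy loading needs no library. The sketch below assumes the first image in document order is the above-the-fold hero, a simplification you should verify against your measured LCP element. It keeps that image eager with `fetchpriority="high"` and lazy-loads the rest. `Img` and `render_images` are hypothetical names.

```python
from dataclasses import dataclass
from html import escape


@dataclass
class Img:
    src: str
    alt: str
    width: int
    height: int


def render_images(images: list[Img], eager_count: int = 1) -> list[str]:
    """Render <img> tags, lazy-loading every image after the first `eager_count`."""
    tags = []
    for i, img in enumerate(images):
        attrs = (f'src="{escape(img.src)}" alt="{escape(img.alt)}" '
                 f'width="{img.width}" height="{img.height}"')
        if i >= eager_count:
            attrs += ' loading="lazy"'        # offscreen: fetched only near the viewport
        elif i == 0:
            attrs += ' fetchpriority="high"'  # assumed LCP candidate: fetch it first
        tags.append(f"<img {attrs}>")
    return tags


for tag in render_images([Img("/img/hero.avif", "Oak dining table", 1200, 675),
                          Img("/img/detail.avif", "Table leg joinery", 800, 600)]):
    print(tag)
```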

CDN Integration: Providers such as Cloudflare or Imgix cache images on edge servers close to each visitor, shortening the distance every image request travels.

Image Sitemaps: Help search engines discover your images by listing them in an XML sitemap with Google’s image extension. Plugins such as Yoast SEO add image entries to their XML sitemaps automatically.
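Outside WordPress, an image sitemap is simple to generate. Here is a minimal sketch using Python’s standard library, assuming you already have a mapping from page URLs to the images on each page (the helper name and URLs are hypothetical). It emits only `<image:loc>`, since Google deprecated the other image sitemap tags (caption, title, geo location, license) in 2022.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
IMAGE_NS = "http://www.google.com/schemas/sitemap-image/1.1"

# Serialize the sitemap namespace as the default and the image namespace as "image:".
ET.register_namespace("", SITEMAP_NS)
ET.register_namespace("image", IMAGE_NS)


def image_sitemap(pages: dict[str, list[str]]) -> str:
    """Return sitemap XML listing each page URL with the image URLs it contains."""
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for page_url, image_urls in pages.items():
        url = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        ET.SubElement(url, f"{{{SITEMAP_NS}}}loc").text = page_url
        for image_url in image_urls:
            image = ET.SubElement(url, f"{{{IMAGE_NS}}}image")
            ET.SubElement(image, f"{{{IMAGE_NS}}}loc").text = image_url
    # Write the declaration by hand so it always states UTF-8.
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + ET.tostring(urlset, encoding="unicode")


print(image_sitemap({"https://example.com/tables": ["https://example.com/img/oak-table.avif"]}))
```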

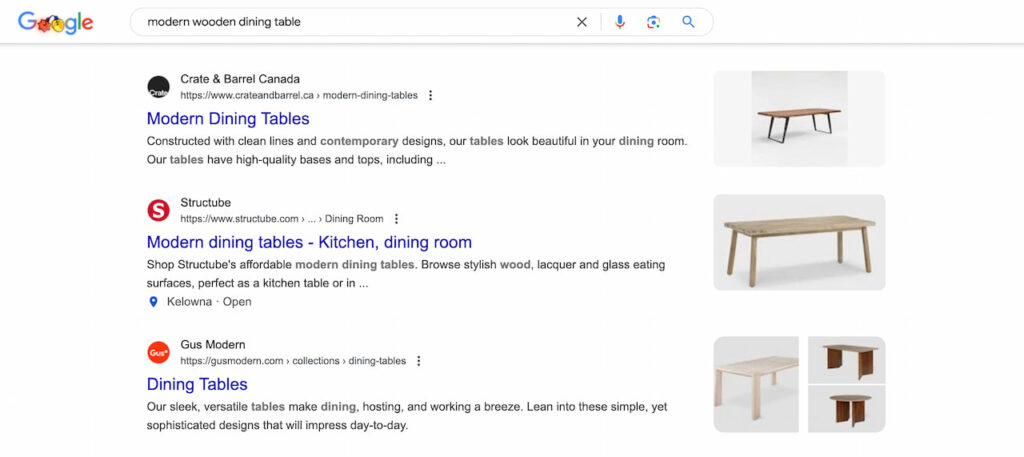

How Does Visual Search Change Image Optimization Strategy?

Google Lens processes over 20 billion visual searches per month as of early 2026, a 43% increase from 2024’s 14 billion monthly average. Image-based searches now represent approximately 26% of all Google queries. These are not supplementary interactions. They are a primary discovery channel operating independently of text-based search.

Circle to Search expanded that channel further. Google updated the feature in February 2026 to allow users to explore multiple items in a single image simultaneously. A user looking at a photo can circle a jacket, a watch, and a pair of shoes in the same gesture and get results for all three. No search bar required.

Gen Z starts 1 in 10 searches with Google Lens, and 20% of those carry commercial intent. Across shoppers, 62% prefer visual search for product discovery. The behavioral shift is concentrated in younger demographics but growing across all age groups.

What this requires from content teams: Every product image, infographic, and original visual asset is a potential organic entry point. Images need to be discoverable on their own merits, not just as supplements to page text. Clear subjects, high resolution, contextually relevant composition, and proper metadata are baseline requirements. Stock photography performs poorly in visual search because the same images appear across hundreds of sites. Original visuals create unique discovery paths.

Image optimization is no longer a subtask of page optimization. It is a parallel acquisition channel.

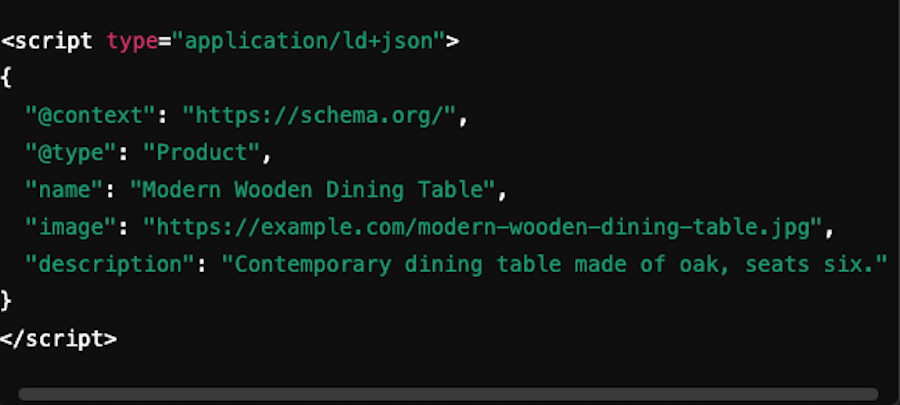

What Are the New ImageObject Schema Properties?

Google expanded ImageObject structured data with three properties that move attribution beyond the previous IPTC-only approach: creditText, copyrightNotice, and creator. These give publishers explicit, machine-readable control over how images are attributed in Google Images and AI-generated results.

- “creditText” identifies the person or organization associated with a published image.

- “copyrightNotice” describes the copyright terms.

- “creator” specifies the image author (a Person or Organization), using the same structure as the author property of CreativeWork.

All three can be expressed in JSON-LD, the structured data format Google recommends.
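A minimal sketch of that markup, generated in Python so values are escaped safely. The helper name and example values are hypothetical; the property names (`contentUrl`, `creditText`, `copyrightNotice`, `creator`) are the schema.org ImageObject properties described above.

```python
import json


def image_object_jsonld(content_url: str, credit_text: str, copyright_notice: str,
                        creator_name: str, creator_type: str = "Person") -> str:
    """Return a JSON-LD <script> tag carrying one image's attribution metadata."""
    if creator_type not in ("Person", "Organization"):
        raise ValueError("creator_type must be 'Person' or 'Organization'")
    data = {
        "@context": "https://schema.org",
        "@type": "ImageObject",
        "contentUrl": content_url,            # URL of the image file itself
        "creditText": credit_text,            # who is credited when the image is shown
        "copyrightNotice": copyright_notice,  # copyright terms for the image
        "creator": {"@type": creator_type, "name": creator_name},
    }
    # Escape "<" so a value containing "</script>" cannot close the tag early.
    body = json.dumps(data, indent=2, ensure_ascii=False).replace("<", "\\u003c")
    return f'<script type="application/ld+json">\n{body}\n</script>'


print(image_object_jsonld("https://example.com/img/oak-table.avif", "Photo: Jane Doe",
                          "© 2026 Example Furniture", "Jane Doe"))
```

Because this markup is page-level, the same call has to be rendered on every page that shows the image.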

The distinction between structured data and IPTC metadata matters. JSON-LD associates image metadata with the page on which the image appears, so each page using the same image needs its own markup. IPTC metadata is embedded in the image file and travels with it across pages. Both have a role: structured data controls the page-level signal, and IPTC controls the file-level signal.

Google also added support for preferred image selection through metadata. The primaryImageOfPage property and the og:image meta tag both influence which thumbnail appears in search results and Discover. Previously, image selection was entirely algorithmic. Now publishers have explicit tools to state a preference.
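A sketch of both signals together, assuming a page with a single preferred hero image. The helper is hypothetical, and nesting an ImageObject under `primaryImageOfPage` is one valid schema.org shape rather than the only form Google accepts.

```python
import json
from html import escape


def preferred_image_markup(page_url: str, image_url: str) -> str:
    """Emit an og:image tag plus a WebPage node naming the same preferred image."""
    og_tag = f'<meta property="og:image" content="{escape(image_url)}">'
    webpage = {
        "@context": "https://schema.org",
        "@type": "WebPage",
        "url": page_url,
        # Assumed structure: an ImageObject node pointing at the preferred thumbnail.
        "primaryImageOfPage": {"@type": "ImageObject", "url": image_url},
    }
    body = json.dumps(webpage).replace("<", "\\u003c")  # keep "</script>" out of the payload
    return og_tag + '\n<script type="application/ld+json">' + body + "</script>"


print(preferred_image_markup("https://example.com/oak-table",
                             "https://example.com/img/oak-table-1200.avif"))
```

Pointing both signals at the same URL avoids giving Google conflicting preferences.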

As AI systems surface images in generated responses, proper attribution metadata determines whether Google credits the original source. Pages with complete ImageObject markup signal originality to both traditional and AI retrieval systems. Incomplete markup leaves attribution to algorithmic inference, which defaults toward larger domains.

How Do Image Specifications Affect Google Discover Performance?

Google Discover requires images at least 1200 pixels wide and the max-image-preview:large meta robots tag to display large image cards. Without both, Discover cannot show the visual previews that drive engagement.

Google recommends images exceeding 300,000 total pixels (a 1280×720 image at 16:9 produces 921,600 pixels, well above the threshold). The 16:9 aspect ratio optimizes for mobile display.
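The pixel math is easy to automate across an image library. The sketch below assumes you already know each image's dimensions (for example, from your CMS). `discover_check` is a hypothetical helper, and the thresholds are the 1200px and 300,000-pixel figures quoted above.

```python
from math import gcd

MIN_WIDTH = 1200             # minimum width for Discover large previews (per the figures above)
MIN_TOTAL_PIXELS = 300_000   # recommended total pixel count (per the figures above)


def discover_check(width: int, height: int) -> dict:
    """Report how an image's dimensions compare with the Discover guidelines."""
    total = width * height
    divisor = gcd(width, height)
    return {
        "total_pixels": total,
        "meets_min_width": width >= MIN_WIDTH,
        "exceeds_pixel_recommendation": total > MIN_TOTAL_PIXELS,
        "aspect_ratio": f"{width // divisor}:{height // divisor}",  # reduced ratio, e.g. "16:9"
        "is_16_9": width * 9 == height * 16,
    }


print(discover_check(1280, 720))
print(discover_check(800, 450))
```

An 800×450 image totals 360,000 pixels, so it clears the pixel recommendation but still fails the 1200px width minimum. Both checks matter.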

The CTR impact is substantial

Pages with 1200px+ images and “max-image-preview:large” see 45% higher click-through rates in Discover. Original images with clear subjects in 16:9 deliver a 79% higher CTR compared to stock photos or undersized images. Large previews increase CTR by 20-50% in regular search as well.

The “max-image-preview:large” tag is one of the highest-ROI technical changes a publisher can make. It requires a single line of HTML. Without it, Google cannot display large image previews, even if all other specifications are met.
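The tag itself is `<meta name="robots" content="max-image-preview:large">`. To audit pages for it, here is a minimal sketch using Python’s standard-library `html.parser`. It assumes the directive is set in a `robots` or `googlebot` meta tag. It does not check the `X-Robots-Tag` HTTP header, which can carry the same directive, so treat a `False` result as "not found in the HTML" rather than "missing everywhere". The class and function names are hypothetical.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self):
        super().__init__()
        self.directives: list[str] = []

    def handle_starttag(self, tag, attrs):
        # html.parser lowercases tag and attribute names; values keep their case.
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            self.directives += [d.strip().lower() for d in attr.get("content", "").split(",")]


def allows_large_image_preview(html_text: str) -> bool:
    """True if the page's robots meta tags include max-image-preview:large."""
    parser = RobotsMetaParser()
    parser.feed(html_text)
    parser.close()
    return "max-image-preview:large" in parser.directives


print(allows_large_image_preview('<head><meta name="robots" content="max-image-preview:large"></head>'))
```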

Stock photography is a particular weakness for Discover. The same stock image across dozens of sites signals low originality. Custom screenshots, diagrams, branded infographics, and original photography consistently outperform stock imagery in both Discover CTR and visual search discovery.

How Do Images Affect AI Overview Citations?

Pages that combine text, images, and full structured data integration see up to 317% more AI Overview citations than text-only pages. Without complete schema markup, pages combining text with images and video still see 156% higher selection rates. Visual content is not an engagement metric here. It is a citation signal.

The mechanism is redundancy-based validation. When text claims align with visual evidence and both are tagged with structured data, AI systems assign higher confidence to the source. A comparison table rendered as an infographic, plus the same data in HTML, plus schema markup gives AI systems three signals to validate the same claim. Each additional format increases citation probability.

This connects directly to the ImageObject schema. Attribution metadata tells AI systems who created the visual content and under what terms. Pages with complete creditText, copyrightNotice, and creator properties provide the provenance signal AI retrieval systems use when selecting sources. Incomplete attribution forces the system to infer provenance, which defaults toward established domains.

For content teams, every data point or comparison should exist in at least two formats: text and visual. A comparison table rendered as both HTML and an image, with ImageObject schema on the image, creates the multimodal signal. The effort is minimal. The citation advantage is measurable.
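A minimal sketch of that pattern, assuming the chart image has already been rendered and uploaded (`chart_url` is a placeholder). It outputs the same data three ways: an HTML table, the chart image with a caption, and ImageObject markup for the chart. The function name is hypothetical.

```python
import json
from html import escape


def comparison_in_two_formats(caption: str, headers: list[str], rows: list[list[str]],
                              chart_url: str, credit_text: str) -> str:
    """Return an HTML table, a captioned chart image, and ImageObject JSON-LD, one per line."""
    # 1. The data as an HTML table that text-based systems can parse directly.
    head = "".join(f"<th>{escape(h)}</th>" for h in headers)
    body = "".join(
        "<tr>" + "".join(f"<td>{escape(cell)}</td>" for cell in row) + "</tr>" for row in rows
    )
    table = (f"<table><caption>{escape(caption)}</caption>"
             f"<thead><tr>{head}</tr></thead><tbody>{body}</tbody></table>")
    # 2. The same data as a chart image (assumed to exist already at chart_url).
    figure = (f'<figure><img src="{escape(chart_url)}" alt="{escape(caption)}">'
              f"<figcaption>{escape(caption)}</figcaption></figure>")
    # 3. ImageObject markup tying the chart to its caption and credit.
    image = {"@context": "https://schema.org", "@type": "ImageObject",
             "contentUrl": chart_url, "caption": caption, "creditText": credit_text}
    script = ('<script type="application/ld+json">'
              + json.dumps(image).replace("<", "\\u003c") + "</script>")
    return "\n".join([table, figure, script])


print(comparison_in_two_formats(
    "Image formats compared with JPEG",
    ["Format", "File Size vs. JPEG", "Browser Support (2025)"],
    [["AVIF", "~50% smaller", "~93.8%"], ["WebP", "~25-35% smaller", "~95.3%"]],
    "https://example.com/img/format-comparison.avif", "Example Publisher"))
```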

Acquisition Through Clarity and Geography

Originality is a primary ranking signal for 2026; avoid stock imagery, which signals low value to both users and search engines.

- Geotagging for Local SEO: For location-specific businesses, embedding geocoordinates into images can enhance visibility in local search results.

- Product Clarity: For e-commerce, use multiple angles and consistent lighting to inspire purchase decisions while signaling quality to Google’s product crawlers.

- Thumbnail Optimization: Treat thumbnails as gateways; use high-contrast, eye-catching visuals that accurately reflect the content to drive higher engagement rates.

YouTube citations in AI Overviews for e-commerce queries have grown sharply in recent Semrush analyses, confirming that visual and video content is heavily weighted in commercial AI results.

Frequently Asked Questions About Image SEO in 2026

Does Switching to AVIF Format Improve Search Rankings?

AVIF does not directly affect rankings as a format signal. The benefit is indirect: AVIF files are roughly 50% smaller than JPEG, which improves Largest Contentful Paint scores. Since LCP is a Core Web Vitals metric and a confirmed ranking factor, faster image loading improves page performance scores.

Is Google Lens Traffic Visible in Google Analytics?

Google Lens traffic appears in Google Analytics under organic search, not as a separate channel. You cannot isolate Lens-specific sessions in GA4 with default reporting. Search Console shows some image search data, but does not break out Lens queries individually.

Do I Need ImageObject Schema on Every Page That Uses an Image?

Yes. Structured data associates metadata with the specific page where the image appears. If the same image is used on three different pages, each page needs its own ImageObject markup. IPTC metadata embedded in the image file travels with it automatically, but structured data does not.

What Is the Minimum Image Size for Google Discover?

Google requires images to be at least 1200 pixels wide for large image previews in Discover. Google also recommends images with a total pixel count exceeding 300,000. A 16:9 aspect ratio works best for mobile displays. The max-image-preview:large meta robots tag must also be present on the page.

Does Circle to Search Affect How I Should Optimize Product Images?

Circle to Search allows users to search for any image on their phone screen by circling or highlighting it. Product images should be high resolution, clearly show the product without clutter, and include relevant context. Images from social content and external sources can also trigger Circle to Search discovery back to the original source.

The shifts in image optimization are structural, not cosmetic. Format delivery, visual search, schema attribution, and AI citation signals all changed how Google evaluates and surfaces visual content. If your team is ready to update your image strategy for these new mechanisms, reach out to Blacksmith, and we will walk through what applies to your site.